The Generative AI Server Market is rapidly emerging as a cornerstone of modern digital infrastructure, driven by the explosive adoption of artificial intelligence (AI) across industries. As enterprises increasingly deploy generative AI models for applications such as content generation, automation, and advanced analytics, the demand for high-performance computing infrastructure continues to surge.

According to insights from MarketsandMarkets, the generative AI server market is expected to grow from USD 103.92 billion in 2025 to USD 448.60 billion by 2030, registering a CAGR of 34.0% during the forecast period. This growth is fueled by advancements in AI algorithms, the proliferation of large language models, and increasing investments in hyperscale data centers.
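As a quick sanity check on the forecast figures quoted above, the growth rate can be recomputed with the standard compound annual growth rate formula, (end / start)^(1 / years) - 1. The sketch below is illustrative only: the function name `cagr` and the variable names are assumptions made for this example and do not come from the MarketsandMarkets report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.34 means 34%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market size figures quoted in the text, in USD billion.
start_2025 = 103.92
end_2030 = 448.60
years = 2030 - 2025  # five-year forecast period

rate = cagr(start_2025, end_2030, years)
print(f"Implied CAGR: {rate:.1%}")  # prints "Implied CAGR: 34.0%"
```

The implied rate rounds to 34.0%, which agrees with the CAGR stated in the forecast.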

Market Overview

Generative AI servers are specialized computing systems designed to handle the intense processing requirements of AI workloads, including training complex models and running inference tasks. These servers are optimized for parallel processing, high memory bandwidth, and efficient thermal management, making them essential for deploying next-generation AI applications.

With industries such as healthcare, finance, retail, and manufacturing embracing AI-driven transformation, generative AI servers are becoming indispensable for enabling real-time intelligence and scalable AI operations.

Market Segmentation Analysis

By Processor Type

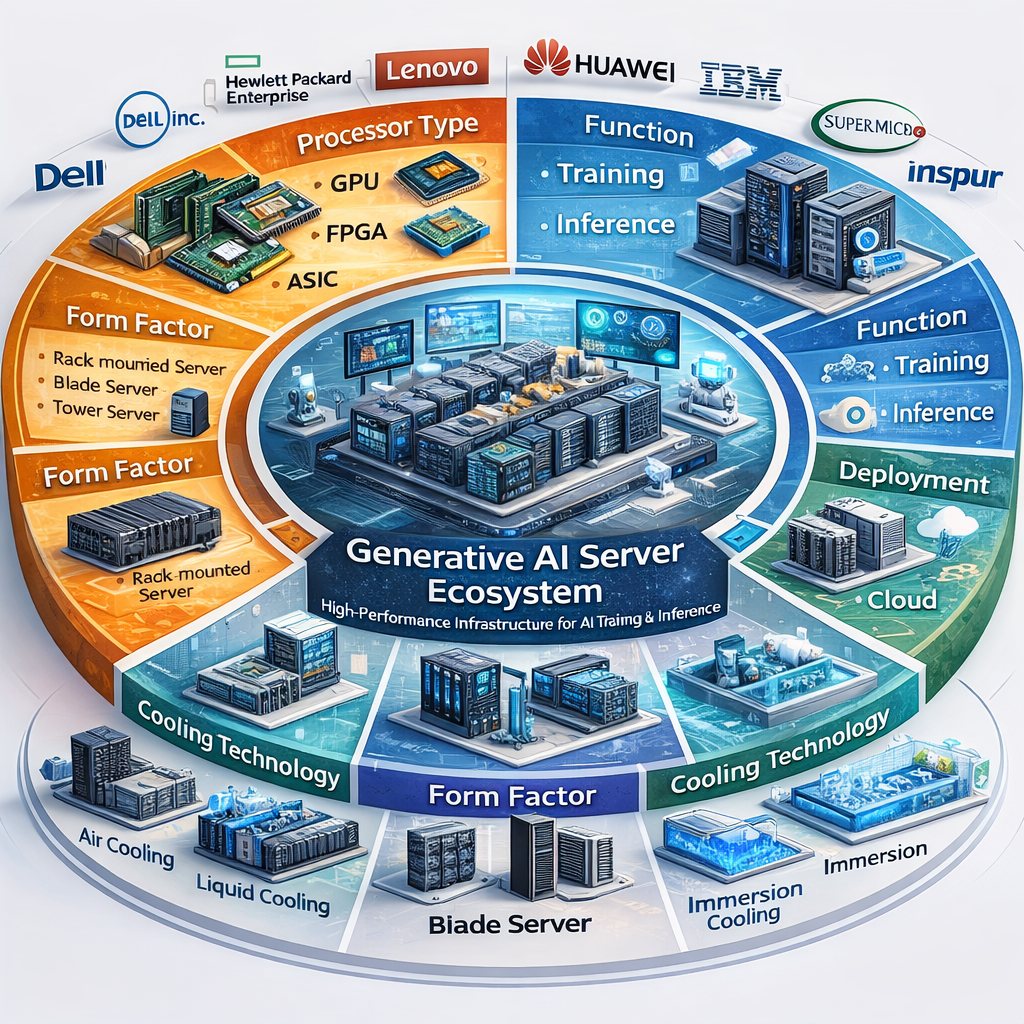

The generative AI server market is segmented into:

GPU (Graphics Processing Unit):

GPUs dominate the market due to their superior parallel processing capabilities, making them ideal for training large-scale AI models and handling complex computations.

FPGA (Field-Programmable Gate Array):

FPGAs offer flexibility and lower latency, making them suitable for customized AI workloads and real-time processing applications.

ASIC (Application-Specific Integrated Circuit):

ASICs are gaining traction as they provide high efficiency and performance for specific AI tasks, particularly in inference workloads where energy efficiency is critical.

Among these, GPU-based servers hold the largest market share, while ASICs are expected to witness significant growth due to increasing demand for specialized AI hardware.

By Function

Training:

Training involves processing vast datasets to build AI models. This segment requires high computational power and remains a major contributor to market revenue.

Inference:

Inference is the process of deploying trained models to generate outputs in real time. This segment is growing rapidly as AI applications scale across industries.

While training drives initial adoption, inference is emerging as the fastest-growing segment, driven by real-time AI applications such as chatbots, recommendation engines, and autonomous systems.

By Form Factor

Rack-mounted Servers:

These servers dominate the market due to their scalability, high density, and suitability for data center environments.

Blade Servers:

Blade servers offer compact design and efficient resource utilization, making them ideal for space-constrained environments.

Tower Servers:

Tower servers are used in smaller deployments or edge environments where large-scale infrastructure is not required.

Among these, rack-mounted servers hold the largest share, driven by their widespread use in hyperscale data centers (https://www.marketsandmarkets.com/Market-Reports/generative-ai-server-market-242200223.html).

Download PDF Brochure @ https://www.marketsandmarkets.com/pdfdownloadNew.asp?id=242200223

By Deployment

On-premises:

On-premises deployment provides greater control, security, and compliance, making it suitable for industries handling sensitive data.

Cloud:

Cloud deployment is gaining dominance due to its scalability, flexibility, and cost efficiency. It allows organizations to access AI capabilities without heavy upfront investments.

The cloud segment is expected to witness the highest growth, driven by increasing adoption of AI-as-a-service platforms and cloud-based AI infrastructure.

By Cooling Technology

Air Cooling:

Air cooling is the traditional method and remains widely used, but it is increasingly challenged by the high power densities of AI servers.

Liquid Cooling:

Liquid cooling is emerging as a preferred solution for generative AI servers due to its superior thermal efficiency and ability to handle high-performance workloads.

As AI workloads intensify, liquid cooling is becoming critical for maintaining performance and reducing energy consumption in data centers.

Regional Insights

North America:

Leads the market due to the strong presence of hyperscalers, an advanced AI ecosystem, and significant investments in data center infrastructure.

Europe:

Witnessing steady growth driven by digital transformation initiatives and increasing AI adoption across industries.

Asia Pacific:

Expected to grow at the highest rate due to rapid industrialization, government initiatives, and expansion of cloud infrastructure in countries like China, India, and Japan.

Key Market Drivers

1. Explosion of Generative AI Applications

The rise of generative AI tools for content creation, coding, and automation is significantly increasing demand for high-performance servers.

2. Growth of Hyperscale Data Centers

Major cloud providers are investing heavily in AI infrastructure to support large-scale AI workloads.

3. Advancements in AI Hardware

Continuous innovation in GPUs, ASICs, and specialized processors is enhancing performance and efficiency.

4. Increasing Demand for Real-Time AI Processing

Applications such as virtual assistants, recommendation systems, and autonomous technologies require low-latency inference capabilities.

Challenges in the Market

High Infrastructure Costs:

Generative AI servers require significant capital investment, especially for large-scale deployments.

Energy Consumption:

AI workloads consume substantial power, increasing operational costs and environmental concerns.

Thermal Management Issues:

High-density computing environments demand advanced cooling solutions to maintain efficiency.

Skill Gap:

Deploying and managing AI infrastructure requires specialized expertise, which is currently limited.

Competitive Landscape

The generative AI server market features a mix of global technology leaders and specialized hardware providers, including:

- NVIDIA Corporation

- Advanced Micro Devices (AMD)

- Intel Corporation

- IBM Corporation

- Dell Technologies

- Hewlett Packard Enterprise (HPE)

- Lenovo Group Limited

- Super Micro Computer, Inc.

- Cisco Systems, Inc.

These companies are focusing on AI-optimized hardware, strategic partnerships, and innovation in cooling and deployment technologies to strengthen their market position.

Future Outlook

The future of the generative AI server market looks highly promising, with continuous advancements in AI models and increasing enterprise adoption driving demand. As organizations prioritize digital transformation and data-driven decision-making, generative AI servers will play a pivotal role in enabling scalable and efficient AI operations.

The integration of edge computing, AI acceleration, and energy-efficient architectures is expected to further transform the market landscape, making AI more accessible and sustainable.

The Generative AI Server Market is set to redefine the computing infrastructure landscape, enabling organizations to harness the full potential of AI. With strong growth projections, evolving technologies, and expanding applications, the market presents significant opportunities for innovation and investment.

As businesses continue to adopt AI-driven solutions, the need for high-performance, scalable, and energy-efficient server infrastructure will only intensify, positioning generative AI servers at the heart of the digital future.

FAQ

1. What is a generative AI server?

A generative AI server is a high-performance computing system designed to handle intensive AI workloads such as training large models and running real-time inference for applications like chatbots, content generation, and automation.

2. Which processor type is most widely used in generative AI servers?

GPU-based servers are the most widely used due to their strong parallel processing capabilities, while FPGA and ASIC processors are gaining traction for specialized and energy-efficient workloads.

3. What is the difference between training and inference in generative AI servers?

Training involves building AI models using large datasets and requires high computational power, whereas inference refers to deploying trained models to generate outputs in real time and is growing rapidly with AI adoption.

4. Why is cloud deployment popular in the generative AI server market?

Cloud deployment is popular because it offers scalability, flexibility, and cost efficiency, allowing organizations to access powerful AI infrastructure without heavy upfront investments.

5. What role does cooling technology play in generative AI servers?

Cooling technology is critical for maintaining performance and efficiency, with liquid cooling emerging as a preferred solution due to its ability to handle high power densities and reduce energy consumption in advanced AI workloads.

About MarketsandMarkets™

MarketsandMarkets™ has been recognized as one of America’s Best Management Consulting Firms by Forbes, as per their recent report.

MarketsandMarkets™ is a blue ocean alternative in growth consulting and program management, leveraging a man-machine offering to drive supernormal growth for progressive organizations in the B2B space. With the widest lens on emerging technologies, we are proficient in co-creating supernormal growth for clients across the globe.

Today, 80% of Fortune 2000 companies rely on MarketsandMarkets, and 90 of the top 100 companies in each sector trust us to accelerate their revenue growth. With a global clientele of over 13,000 organizations, we help businesses thrive in a disruptive ecosystem.

The B2B economy is witnessing the emergence of $25 trillion in new revenue streams that are replacing existing ones within this decade. We work with clients on growth programs, helping them monetize this $25 trillion opportunity through our service lines – TAM Expansion, Go-to-Market (GTM) Strategy to Execution, Market Share Gain, Account Enablement, and Thought Leadership Marketing.

Built on the ‘GIVE Growth’ principle, we collaborate with several Forbes Global 2000 B2B companies to keep them future-ready. Our insights and strategies are powered by industry experts, cutting-edge AI, and our Market Intelligence Cloud, KnowledgeStore™, which integrates research and provides ecosystem-wide visibility into revenue shifts.

To find out more, visit www.marketsandmarkets.com or follow us on Twitter, LinkedIn, and Facebook.

Contact:

Mr. Rohan Salgarkar

MarketsandMarkets™ INC.

1615 South Congress Ave.

Suite 103, Delray Beach, FL 33445

USA: +1-888-600-6441