The global generative AI server market is entering a phase of rapid growth, fueled by surging demand for high-performance computing (HPC). As organizations race to deploy advanced AI models, particularly large language models (LLMs) and other generative applications, powerful, scalable, and efficient server infrastructure has become mission-critical.

According to MarketsandMarkets, the generative AI server market was valued at approximately USD 71.7 billion in 2024 and is projected to reach USD 448.6 billion by 2030, expanding at a remarkable CAGR of 34%. This rapid expansion underscores how foundational high-performance computing has become in the AI-driven economy.
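
For readers who want to check how a compound annual growth rate relates to the two endpoint values, the short Python sketch below applies the standard CAGR formula to the figures quoted above. The function names and the choice of six compounding periods (2024 to 2030) are assumptions made for illustration, not details taken from the report. With that assumption the implied rate comes out somewhat above the rounded 34%, which may mean the report measures its forecast period from a different base year.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


# Market sizes quoted in the article, in USD billions.
value_2024 = 71.7
value_2030 = 448.6

# Assumption: six compounding periods between 2024 and 2030.
implied_rate = cagr(value_2024, value_2030, years=6)
print(f"Implied CAGR over 6 years: {implied_rate:.1%}")

# For comparison: where a flat 34% rate would land from the same 2024 base.
print(f"2030 value at 34%: USD {project(value_2024, 0.34, 6):.1f} billion")
```

Changing `years` to 5 shows how sensitive the implied rate is to the base year chosen for the forecast period.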

The HPC Boom Behind Generative AI Growth

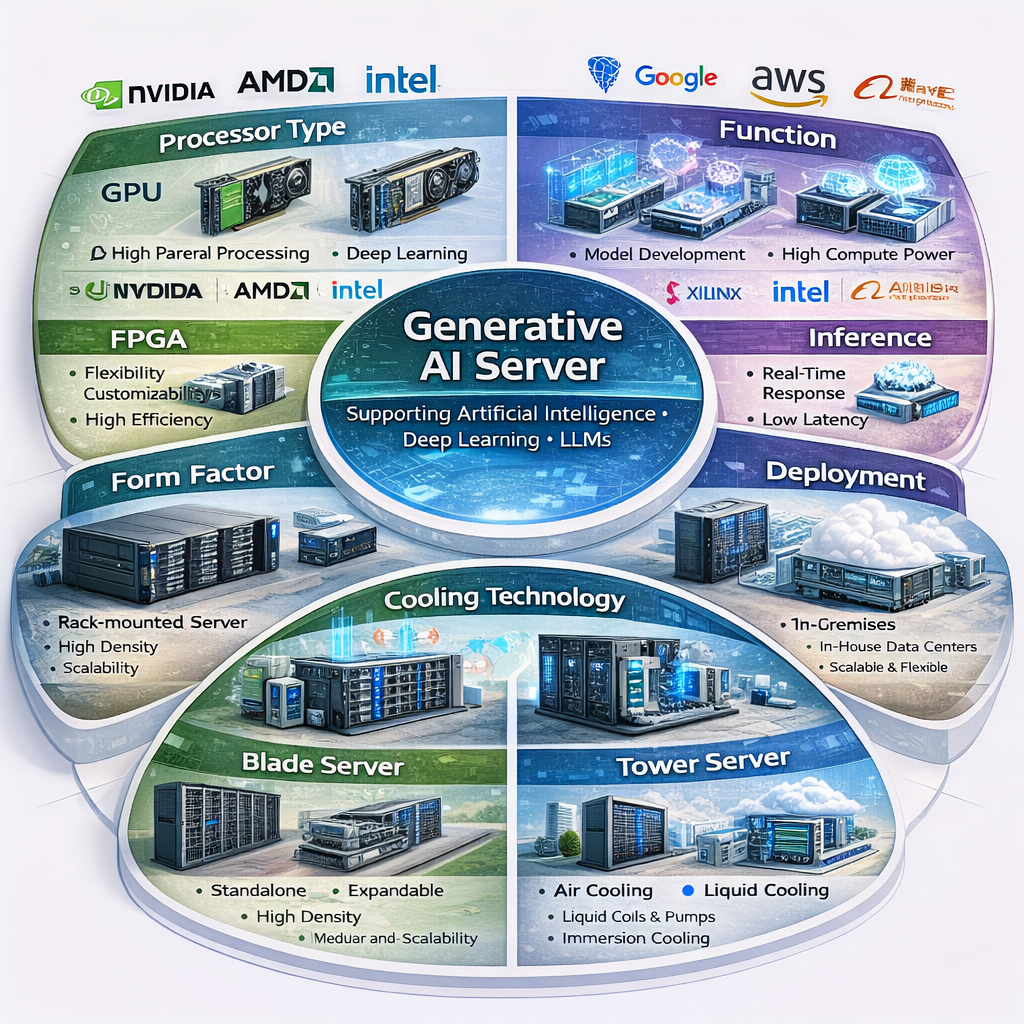

At the heart of this surge lies the computational intensity of generative AI. Applications such as text generation, image synthesis, video creation, and code development require massive processing power and real-time data handling. These workloads are far more demanding than traditional enterprise computing, pushing organizations toward specialized AI servers equipped with advanced GPUs, ASICs, and high-bandwidth memory.

Unlike conventional IT infrastructure, generative AI systems must support both large-scale model training and continuous inference. While training is resource-intensive, inference—running AI models in real-world applications—requires low latency and high throughput at scale, further accelerating demand for optimized HPC systems.

GPU Dominance and Specialized Hardware Innovation

One of the most defining trends in the generative AI server market is the dominance of GPU-based architectures. GPUs account for the largest share of the market due to their superior parallel processing capabilities, making them ideal for handling complex AI workloads.

At the same time, innovation is accelerating in custom AI chips and accelerators. Companies are increasingly adopting ASICs and FPGAs to optimize performance, reduce latency, and improve energy efficiency. This shift toward specialized hardware is reshaping server design and enabling faster, more cost-effective AI deployments.

Hyperscale Data Centers Driving Infrastructure Expansion

The rapid rise of generative AI is closely tied to the expansion of hyperscale data centers. Cloud providers and large enterprises are investing heavily in AI-ready infrastructure to support growing workloads. These facilities are designed to house high-density servers capable of handling massive computational demands.

Cloud deployment remains the dominant model, allowing organizations to access scalable AI infrastructure without heavy upfront investments. At the same time, edge computing is gaining traction, enabling faster data processing closer to the source and supporting real-time AI applications.

Inference Workloads Redefining Market Dynamics

A key shift in the market is the transition from training-focused workloads to inference-driven demand. As generative AI applications move into production—powering chatbots, virtual assistants, recommendation engines, and content generation tools—the need for continuous inference processing is skyrocketing.

This evolution is fundamentally changing server requirements. Instead of sporadic high-intensity workloads, enterprises now require always-on infrastructure capable of handling billions of daily queries with minimal latency.
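
To make the always-on requirement concrete, the sketch below turns a daily query volume into a rough server count using Little's law (requests in flight = arrival rate × time in system). Every input here is a hypothetical placeholder chosen for illustration: the one billion daily queries, the 0.5 second latency, the 32 concurrent requests per server, and the 2x peak factor are assumptions, not figures from the report or from any vendor.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60


def average_qps(queries_per_day: float) -> float:
    """Average queries per second, assuming traffic is spread evenly over the day."""
    return queries_per_day / SECONDS_PER_DAY


def in_flight_requests(qps: float, latency_seconds: float) -> float:
    """Little's law: average requests in flight = arrival rate * time in system."""
    return qps * latency_seconds


def servers_needed(qps: float, latency_seconds: float,
                   concurrent_per_server: int, peak_factor: float = 1.0) -> int:
    """Servers required to hold peak in-flight requests, rounded up."""
    peak_in_flight = in_flight_requests(qps * peak_factor, latency_seconds)
    return math.ceil(peak_in_flight / concurrent_per_server)


# Hypothetical workload: all four numbers below are illustrative assumptions.
qps = average_qps(1_000_000_000)
fleet = servers_needed(qps, latency_seconds=0.5,
                       concurrent_per_server=32, peak_factor=2.0)
print(f"Average load: {qps:,.0f} queries/s, estimated fleet: {fleet} servers")
```

The estimate ignores batching, redundancy, and regional distribution, so it is best read as a lower bound on fleet size. It also shows why latency matters for cost: halving per-request latency halves the number of requests in flight, and with it the servers needed.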

Cooling, Energy, and Sustainability Challenges

As compute density increases, so do power consumption and heat generation. Traditional air cooling is often no longer sufficient for the densest AI server deployments. Liquid cooling technologies are emerging as a critical solution, offering better thermal management and improved energy efficiency.

However, sustainability remains a major concern. The energy demands of AI data centers are significant, prompting industry players to explore greener technologies and more efficient system designs.

Enterprise Adoption Expands Market Opportunities

While early adoption was driven by tech giants and hyperscalers, enterprises across industries are now embracing generative AI. Sectors such as healthcare, finance, retail, media, and manufacturing are integrating AI into their operations to enhance productivity, automate workflows, and drive innovation.

This broadening adoption is creating new revenue streams for server providers and accelerating demand for customized AI infrastructure tailored to specific business needs.

Regional Growth and Emerging Markets

Geographically, North America currently leads the market due to its advanced technological ecosystem and strong presence of cloud providers. However, the Asia-Pacific region is expected to witness the fastest growth, driven by government initiatives, expanding AI ecosystems, and increasing investments in digital infrastructure.

Countries like China, Japan, South Korea, and India are rapidly scaling AI capabilities, further boosting demand for generative AI servers.

Challenges: Cost, Regulation, and Talent Gaps

Despite strong growth, the market faces several challenges. High infrastructure costs remain a significant barrier, as deploying AI servers requires substantial investment in hardware, cooling systems, and energy resources.

Additionally, regulatory concerns around data privacy and sovereignty complicate global AI deployments. Organizations must navigate complex legal frameworks while ensuring compliance. Talent shortages in AI infrastructure design also pose a constraint on market expansion.

Conclusion

The generative AI server market is undergoing a transformative shift, driven by rapidly rising demand for high-performance computing. As generative AI moves from experimentation to large-scale deployment, the need for powerful, efficient, and scalable server infrastructure will only intensify.

From GPU dominance and specialized hardware innovation to hyperscale data center expansion and inference-driven workloads, the market is being reshaped at every level. While challenges around cost, energy, and regulation persist, the overall trajectory remains clear: generative AI servers are becoming the backbone of the next digital revolution.

Frequently Asked Questions (FAQs): Generative AI Server Market Trends

1. What is a generative AI server?

A generative AI server is a high-performance computing system specifically designed to handle the training and deployment (inference) of generative AI models such as large language models, image generators, and video synthesis tools. These servers typically use GPUs, AI accelerators, and high-speed memory to process massive datasets efficiently.

2. Why is demand for generative AI servers increasing so rapidly?

The surge is driven by the widespread adoption of generative AI across industries. Applications like chatbots, content creation, drug discovery, and autonomous systems require immense computational power, pushing organizations to invest in advanced server infrastructure.

3. What role does high-performance computing (HPC) play in this market?

High-performance computing is the backbone of generative AI. It enables fast processing of complex algorithms, supports large-scale model training, and ensures low-latency inference for real-time applications.

4. Which hardware components are most important in generative AI servers?

Key components include GPUs (for parallel processing), CPUs (for general tasks), high-bandwidth memory, storage systems, and specialized AI chips such as ASICs and FPGAs. GPUs currently dominate due to their efficiency in handling AI workloads.

5. What is the difference between training and inference in generative AI?

Training involves feeding large datasets into AI models to help them learn patterns, which requires massive computing power. Inference is the process of using trained models to generate outputs in real time, requiring speed and efficiency at scale.

6. How are cloud and edge computing impacting the market?

Cloud computing allows scalable and cost-effective access to AI infrastructure, making it the preferred deployment model. Edge computing is growing as it enables faster, real-time processing closer to the data source, reducing latency.

About MarketsandMarkets™

Forbes has recognized MarketsandMarkets™ as one of America’s Best Management Consulting Firms in a recent report.

MarketsandMarkets™ is a blue ocean alternative in growth consulting and program management, leveraging a man-machine offering to drive supernormal growth for progressive organizations in the B2B space. With the widest lens on emerging technologies, we are proficient in co-creating supernormal growth for clients across the globe.

Today, 80% of Fortune 2000 companies rely on MarketsandMarkets, and 90 of the top 100 companies in each sector trust us to accelerate their revenue growth. With a global clientele of over 13,000 organizations, we help businesses thrive in a disruptive ecosystem.

The B2B economy is witnessing the emergence of $25 trillion in new revenue streams that are replacing existing ones within this decade. We work with clients on growth programs, helping them monetize this $25 trillion opportunity through our service lines – TAM Expansion, Go-to-Market (GTM) Strategy to Execution, Market Share Gain, Account Enablement, and Thought Leadership Marketing.

Built on the ‘GIVE Growth’ principle, we collaborate with several Forbes Global 2000 B2B companies to keep them future-ready. Our insights and strategies are powered by industry experts, cutting-edge AI, and our Market Intelligence Cloud, KnowledgeStore™, which integrates research and provides ecosystem-wide visibility into revenue shifts.

To find out more, visit www.MarketsandMarkets.com or follow us on Twitter, LinkedIn, and Facebook.

Contact:

Mr. Rohan Salgarkar

MarketsandMarkets™ INC.

1615 South Congress Ave.

Suite 103, Delray Beach, FL 33445

USA: +1-888-600-6441